TL;DR

For all the advancements in vulnerability remediation, one of the most fundamental challenges remains unsolved: knowing what to fix first. According to the 2025 Remediation Operations Report, prioritization is still not where it needs to be.

In fact, difficulty prioritizing vulnerabilities ranks as the third biggest challenge security teams face when managing vulnerabilities. That’s not just an operational inconvenience; it’s a signal that something core to the remediation process is broken.

Prioritization sits at the intersection of risk, context, and action. It determines which vulnerabilities require immediate attention, which ones can wait, and which are low-risk enough to justify leaving unaddressed – avoiding unnecessary strain on already stretched teams. When it’s done well, prioritization drives speed, clarity, and alignment. When it’s not, everything else – collaboration, noise reduction, even automation – starts to unravel.

In this post, we’ll dig into why prioritization remains such a weak point for many organizations, how it connects to other challenges uncovered in the research, and what security leaders can do to fix it.

Why Prioritization Matters More Than Ever

While prioritization might feel like a back-end process step, it has a front-line impact across almost every part of the remediation lifecycle.

Let’s start with collaboration. Poor collaboration is the second most cited challenge when it comes to managing vulnerabilities (40%) – and is also the leading cause of remediation delays (31%). On the surface, it’s easy to attribute this to process breakdowns or team silos. But look closer, and the data points to misalignment around what matters. Specifically, misaligned priorities between teams (23%) and lack of security context for dev and ops teams (22%) are two of the top barriers to effective collaboration. When security can’t clearly communicate which vulnerabilities require attention and why, engineering teams are left in the dark about what they need to do and when, slowing remediation and breeding frustration.

Then there’s the top vulnerability management challenge: making security findings actionable (41%). That, too, traces back to ineffective prioritization. When there’s a list of hundreds or thousands of findings without any clear indicators of urgency, risk, or relevance, there’s little to act on. And it shows in execution: 16% of organizations link this very issue to remediation delays.

Underpinning all of this is the issue of noise. 90% of organizations report challenges in managing noise, which is just a prioritization challenge in disguise. Without effective risk-based filters, everything looks important. That means low-risk issues get routed alongside high-risk threats, overwhelming workflows and delaying action. The report shows that organizations experiencing high levels of noise take two additional days – on average – to remediate critical vulnerabilities.

When prioritization lacks structure and clarity, it triggers a ripple effect: findings are vague, collaboration becomes strained, and remediation slows. And unless it’s addressed, everything downstream will continue to suffer.

Common Approaches Fall Short

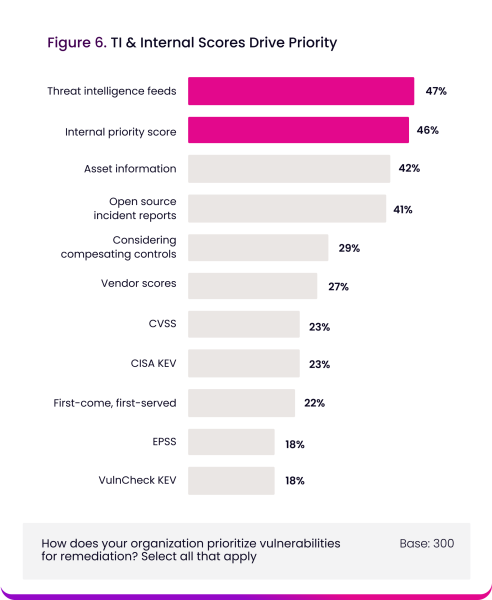

Despite the outsized impact prioritization has on the success of vulnerability remediation, most organizations continue to rely on approaches that fall short. The report reveals that the most commonly used methods are threat intelligence feeds (47%) and internal priority scores (46%). These approaches certainly have value, especially when tailored to an organization’s specific environment, but they’re loosely structured and disconnected from broader risk signals.

More surprisingly, 22% of organizations still use a “first come, first served” model to determine what gets fixed first. It’s one of the most arbitrary approaches available, and it was rated as the least effective method in the report. Yet it’s still in use nearly as often as some of the industry’s most validated frameworks. That’s more than just a procedural flaw; it points to a widespread gap in maturity when it comes to risk-based decision-making.

The Structured Models That Get Prioritization Right

While many organizations still rely on loosely defined methods, the report highlights three structured prioritization models that stand out for their effectiveness:

VulnCheck KEV (98% effective)

VulnCheck’s Known Exploited Vulnerabilities (KEV) catalog aggregates real-time exploit intelligence to identify common vulnerabilities and exposures (CVEs) actively leveraged in the wild. It enriches vulnerability metadata with operational signals – such as exploitation evidence from malware campaigns, open-source proof-of-concept code, and exploitation kits – enabling security teams to prioritize based on current attacker behavior, not theoretical severity.

EPSS (96% effective)

The Exploit Prediction Scoring System (EPSS) applies statistical modeling to estimate the likelihood that a vulnerability will be exploited in the next 30 days. Unlike traditional severity metrics, EPSS uses a continuously updated dataset of exploit activity and vulnerability characteristics to provide probabilistic, data-driven prioritization aligned with emerging threat trends.
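To make the idea concrete, here is a minimal sketch of ranking a backlog by EPSS probability. The `Finding` class, the `rank_by_epss` helper, the placeholder CVE IDs, the scores, and the 0.1 threshold are all illustrative assumptions, not values from the report or from the EPSS project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str   # placeholder identifiers, not real CVEs
    epss: float   # estimated probability of exploitation in the next 30 days (0.0-1.0)


def rank_by_epss(findings, threshold=0.1):
    """Return findings at or above the threshold, highest probability first."""
    selected = [f for f in findings if f.epss >= threshold]
    return sorted(selected, key=lambda f: f.epss, reverse=True)


# Illustrative values only; real scores come from the published EPSS data.
backlog = [
    Finding("CVE-2099-0001", 0.02),
    Finding("CVE-2099-0002", 0.87),
    Finding("CVE-2099-0003", 0.34),
]

for finding in rank_by_epss(backlog):
    print(f"{finding.cve_id}: {finding.epss:.0%}")
```

The threshold is a policy choice rather than a property of the model; a team with more remediation capacity might lower it, while an overloaded team might raise it.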

CISA KEV (96% effective)

CISA’s Known Exploited Vulnerabilities (KEV) catalog is a curated list of vulnerabilities with confirmed exploitation in the wild, maintained by the U.S. government. It reflects real-world adversary activity and serves as a high-confidence source for identifying vulnerabilities that require immediate remediation due to their active use in attacks.
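As a rough illustration, checking scanner findings against a known-exploited catalog can be as simple as a set lookup. The sketch below parses an inline sample instead of downloading anything; the `vulnerabilities` and `cveID` field names are assumptions about the feed layout and should be verified against the catalog you actually consume, and the CVE IDs are placeholders.

```python
import json

# Inline sample shaped like a KEV-style JSON export. The "vulnerabilities" and
# "cveID" field names are assumptions; verify them against the feed you use.
SAMPLE_CATALOG = """
{
  "vulnerabilities": [
    {"cveID": "CVE-2099-0002"},
    {"cveID": "CVE-2099-0007"}
  ]
}
"""


def load_kev_ids(raw_json):
    """Build a set of CVE IDs for constant-time membership checks."""
    catalog = json.loads(raw_json)
    return {entry["cveID"].upper() for entry in catalog.get("vulnerabilities", [])}


def split_by_kev(cve_ids, kev_ids):
    """Partition scanner findings into known-exploited and everything else."""
    exploited = [c for c in cve_ids if c.upper() in kev_ids]
    remaining = [c for c in cve_ids if c.upper() not in kev_ids]
    return exploited, remaining


kev_ids = load_kev_ids(SAMPLE_CATALOG)
exploited, remaining = split_by_kev(
    ["CVE-2099-0001", "CVE-2099-0002", "CVE-2099-0003"], kev_ids
)
print("fix first:", exploited)
print("triage next:", remaining)
```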

What sets these models apart from more commonly used approaches – like threat intelligence feeds and internal priority scores – is the nature of the data they rely on and the structure of their methodology. VulnCheck KEV, EPSS, and CISA KEV use externally sourced, independently maintained datasets that reflect observed exploitation in the wild, predicted exploitation likelihood, or verified inclusion in active threat campaigns.

These models take a more evidence-based approach to prioritization, helping teams move beyond severity and context alone to focus on what’s actually being targeted – or most likely to be. Though each model functions differently, they all offer a structured, scalable way to filter signals from noise using intelligence that’s grounded in attacker behavior, not just assumptions of risk.

It’s no surprise, then, that respondents rated them as the most effective methods available. But as the data also shows, usage remains low: only 18% of organizations use EPSS and VulnCheck KEV, and just 23% use CISA KEV. That gap between effectiveness and adoption is striking, and it’s worth examining.

Why Adoption Lags

Before exploring how to close the gap between effectiveness and adoption, it’s important to understand why so many organizations haven’t embraced structured prioritization models, despite acknowledging their value.

In many cases, the barrier isn’t resistance – it’s awareness. Frameworks like EPSS or VulnCheck KEV are still relatively new, and unlike internal scoring methods or threat feeds, they often require a deeper understanding of external risk modeling and how to integrate that data into existing workflows. Without clear guidance or support for operationalizing these models, they remain underutilized, even when they’re accessible.

Integration is another hurdle. Many security teams rely on legacy processes or fragmented toolsets that weren’t built with structured external models in mind. Introducing new data sources into remediation workflows, ticketing queues, or dashboards can feel disruptive, especially when teams are already overloaded. If a model doesn’t fit neatly into an established process, it’s often deprioritized, regardless of its value.

There’s also a perception issue. Some teams assume that models developed outside the organization won’t account for internal nuances, like asset criticality, compensating controls, or unique threat profiles. As a result, they default to internal scoring systems they can control and customize, even if those systems lack consistency or real-world relevance.

These barriers don’t reflect a lack of value; they reflect a lack of operational fit. Addressing them requires a deliberate shift in how teams adopt, apply, and integrate prioritization into the way remediation actually gets done.

Making Structured Prioritization Operational

Bridging the gap between effectiveness and adoption means addressing the very reasons these models have been slow to take hold. Adoption has lagged not for lack of value, but because teams haven’t had a clear path to operationalize them. Here’s what that path can look like:

- Blend external models with internal context – don’t choose one over the other.

  One of the reasons teams default to internal scoring is familiarity. Asset information, business impact, and internal SLAs are deeply embedded in existing workflows – and they matter. But they’re only part of the picture. External models like EPSS and KEVs add real-world exploitation signals that internal logic can’t provide. These models aren’t meant to replace internal scoring; they’re meant to enhance it. In fact, threat intelligence feeds and asset information were rated 96% and 95% effective, respectively, in the report, underscoring that internal and external signals can – and should – work together to drive better decisions.

- Integrate prioritization models into where work actually happens.

  If prioritization signals are buried in a dashboard or PDF report, they’re easy to ignore. Teams need to embed these models directly into remediation workflows. Making risk intelligence visible at the decision point turns it from a reference into a trigger for action.

- Build confidence through education and context – not just data.

  Models like EPSS or CISA KEV take a different approach than traditional internal scoring, and that shift can raise questions around how to interpret the signals or act on them in real-world scenarios. Bridging this gap starts with making these models more transparent through enablement, training, and documentation that demystifies how they work and fit into your unique operational context. When teams understand why a score matters, they’re more likely to act on it.
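The three steps above can be sketched in a few lines: blend external signals with internal context, then surface the reasoning in the ticket where the work happens. Everything here is hypothetical, including the `Finding` fields, the tier names, the cutoffs, and the 1-to-5 asset criticality scale; treat it as one possible shape, not a recommended policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str             # placeholder identifier
    in_kev: bool            # listed in a known-exploited catalog
    epss: float             # exploitation probability, 0.0-1.0
    asset_criticality: int  # internal rating, 1 (low) to 5 (business-critical)


def priority_tier(f, epss_cutoff=0.1, critical_asset=4):
    """Map external signals plus internal context to a tier (hypothetical rules)."""
    likely_exploited = f.in_kev or f.epss >= epss_cutoff
    important_asset = f.asset_criticality >= critical_asset
    if likely_exploited and important_asset:
        return "P1"
    if likely_exploited or important_asset:
        return "P2"
    return "P3"


def ticket_summary(f):
    """Render the reasoning next to the tier so engineers see why it matters."""
    return (
        f"[{priority_tier(f)}] {f.cve_id} | KEV: {'yes' if f.in_kev else 'no'} | "
        f"EPSS: {f.epss:.0%} | asset criticality: {f.asset_criticality}/5"
    )


# Illustrative findings only.
findings = [
    Finding("CVE-2099-0001", in_kev=False, epss=0.02, asset_criticality=2),
    Finding("CVE-2099-0002", in_kev=True, epss=0.87, asset_criticality=5),
    Finding("CVE-2099-0003", in_kev=False, epss=0.34, asset_criticality=2),
]

for f in sorted(findings, key=priority_tier):
    print(ticket_summary(f))
```

Putting the signals in the summary line, rather than only the tier, is a deliberate choice: it gives dev and ops teams the security context they need without a trip to a separate dashboard.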

Moving forward means embedding structured models into real-world workflows and aligning them with the way teams already think and work. That’s how prioritization shifts from theoretical to operational and starts making a measurable impact on remediation velocity.

Final Thoughts

Prioritization may not be the most visible part of the vulnerability management process, but it’s one of the most decisive. When it’s structured, risk-informed, and embedded into real workflows, everything downstream moves faster and with more clarity. When it’s not, the result is noise, delays, and misaligned effort.

The models are there. The data backs them. What’s needed now is adoption that fits the way teams actually work: layered with internal context, built into decision points, and supported with clear guidance.

Key takeaways:

- Prioritization challenges drive delays, noise, and collaboration issues.

- Structured models like EPSS, VulnCheck KEV, and CISA KEV are among the most effective methods but remain underused.

- Prioritization should blend internal and external signals.

- Operationalizing models requires integration, context, and enablement.
