TL;DR

AI is changing how attacks happen – and how fast they happen. Our 2026 State of Exposure Management report shows why most security teams aren’t struggling to find risk, but to fix it quickly enough. Based on insights from 300 security leaders, it highlights where remediation breaks down, how AI is being used today, and why execution is becoming the real bottleneck.

There’s a lot of conversation right now about AI in cybersecurity, and most of it focuses on how defenders are using it: better prioritization, more automation, less manual work. That’s all happening, and it’s valuable. But it’s also only half the story.

What’s changing just as quickly, if not faster, is how attackers are operating. AI is making it easier to automate reconnaissance, identify weak points, and move from discovery to exploitation much faster than before. That shift puts pressure on something security teams have quietly struggled with for years: execution. Not finding issues, but actually fixing them in time.

AI didn’t create the problem. It exposed it.

One of the clearest takeaways from our 2026 State of Exposure Management report is that most organizations aren’t struggling with visibility anymore. In fact, they have the opposite problem.

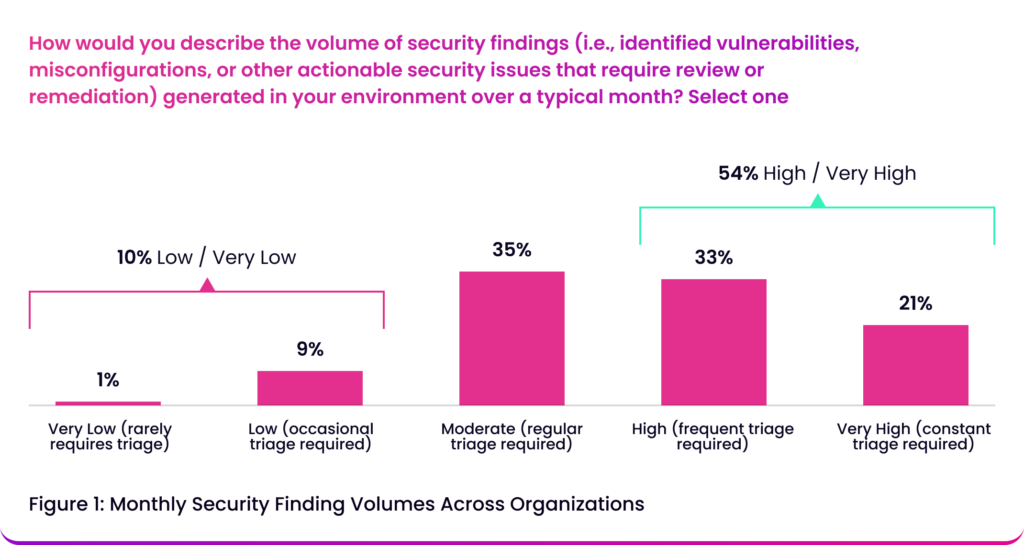

Over half of the security leaders we surveyed (54%) describe their environment as consistently high-volume, meaning they’re dealing with a constant influx of findings that doesn’t really slow down.

The backlog numbers reflect that reality. Sixty-one percent of respondents say at least a quarter of their findings remain unresolved, and for a significant portion, more than half are still open. At that point, the issue isn’t whether teams are identifying the right risks. It’s whether their remediation model can keep up with the scale of what they’re identifying.

The real bottleneck is coordination

Where the gap really shows up is in execution.

A lot of organizations have already automated parts of the workflow. Sixty-six percent report that routing findings into ticketing systems is mostly or fully automated, which on the surface sounds like meaningful progress. But it hasn’t solved the biggest source of friction, which is coordination.

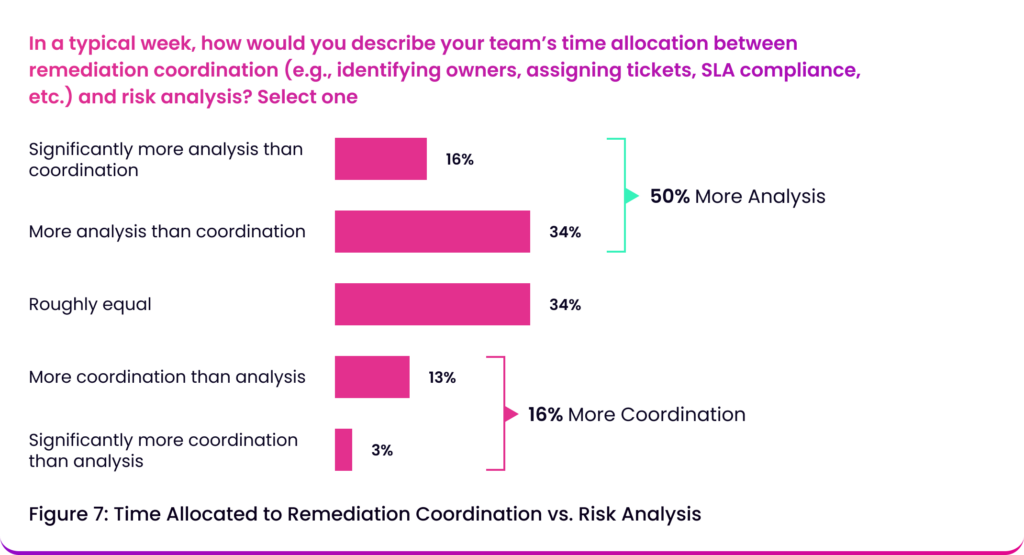

Only about half of organizations say they spend more time on risk analysis than on coordinating remediation work. The rest are spending equal time – or more – on things like figuring out ownership, aligning across teams, and following up on progress.

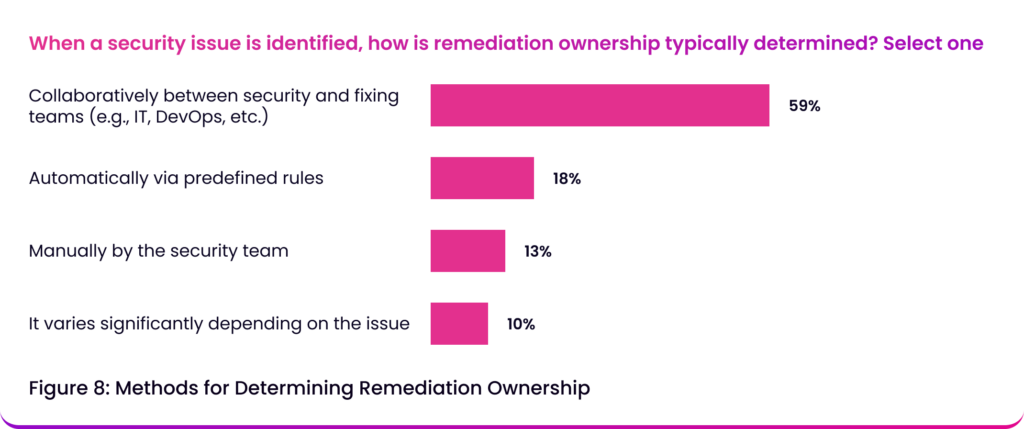

That’s largely because ownership is still being determined manually. Only 18% of organizations assign remediation ownership automatically, while the majority (59%) rely on collaboration to decide who is responsible for fixing something. That model doesn’t scale well when you’re dealing with thousands of findings, and it turns coordination into a significant part of the workload.
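To make the contrast concrete, here is a minimal sketch of what rule-based ownership assignment could look like. Everything in it is an assumption for illustration: the `Finding` shape, the tag names, the team names, and the first-match rule order are hypothetical and not taken from the report or any specific product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    finding_id: str
    asset_tags: frozenset  # hypothetical asset tags, e.g. {"service:payments"}


# Hypothetical tag-to-team rules, ordered from most to least specific.
OWNERSHIP_RULES = [
    ("service:payments", "payments-team"),
    ("cloud:aws", "cloud-platform"),
    ("env:prod", "sre"),
]


def assign_owner(finding: Finding) -> Optional[str]:
    """Return the team for the first matching rule, or None if no rule applies."""
    for tag, team in OWNERSHIP_RULES:
        if tag in finding.asset_tags:
            return team
    return None  # falls back to manual triage


findings = [
    Finding("F-1", frozenset({"service:payments", "env:prod"})),
    Finding("F-2", frozenset({"cloud:aws"})),
    Finding("F-3", frozenset({"env:dev"})),
]

owners = {f.finding_id: assign_owner(f) for f in findings}
unassigned = [fid for fid, owner in owners.items() if owner is None]
```

In this sketch only the findings that match no rule (here `F-3`) would still need a human conversation about ownership; the rest get an owner without any back-and-forth.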

AI is widely adopted, but not fully trusted

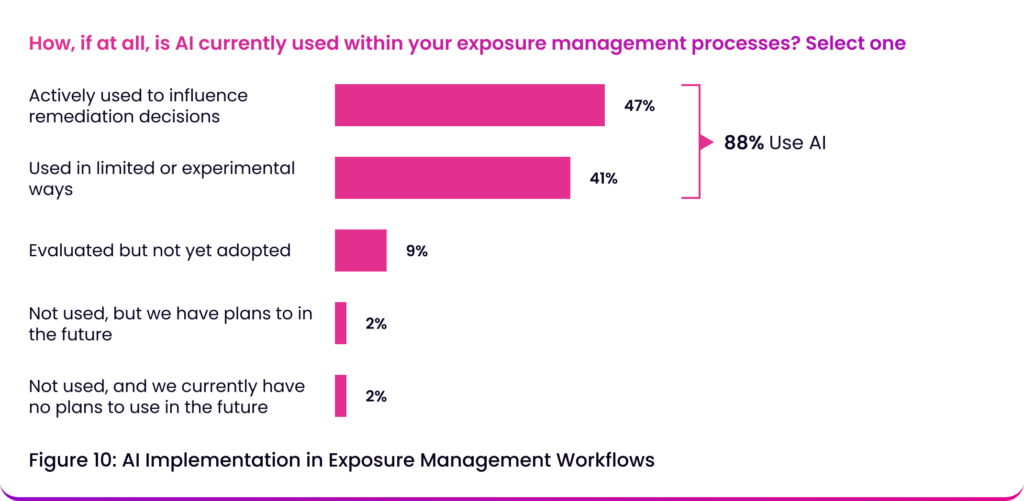

This is where AI is often expected to step in. And to some extent, it already has. Eighty-eight percent of organizations report using AI in their exposure management workflows in some capacity. It’s particularly common in areas like prioritization, data aggregation, and tracking remediation progress. But there’s a clear boundary in how far teams are willing to rely on it.

While most leaders are comfortable using AI to inform decisions, only 31% say they fully trust AI-driven recommendations without human oversight. That hesitation means AI is primarily acting as a support layer rather than an autonomous one. It helps teams move faster, but it doesn’t fundamentally change how decisions are made or how work gets executed.

The gap is becoming riskier

On its own, none of this is new. Security teams have been dealing with backlog, coordination challenges, and inconsistent processes for years. What’s changed is the speed of the threat landscape.

When attackers are using AI to identify and exploit vulnerabilities faster, delays in remediation become more consequential. The gap between detection and resolution isn’t just an operational inefficiency. It’s a growing source of risk.

That makes execution speed far more important than it used to be. It’s no longer enough to know where your exposures are. You need to be able to act on them quickly and consistently.

We’re still measuring activity, not impact

Another challenge is how teams define success. Most organizations are tracking metrics like time to remediation, number of findings identified, and number of findings resolved. These are useful for understanding throughput, but they don’t necessarily indicate whether risk is actually being reduced.

Fewer teams are prioritizing metrics that reflect changes in exposure over time. At the same time, 94% of leaders say they’re confident in how they communicate progress to the business, even though 43% acknowledge that their internal processes are still inconsistent or ad hoc.

That creates a disconnect where reporting is improving faster than execution.
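The difference between an activity metric and an exposure metric can be sketched in a few lines. The numbers below are made up purely for illustration and do not come from the report; `open_risk_score` is a hypothetical aggregate of the risk carried by findings still open at the end of each week.

```python
from dataclasses import dataclass


@dataclass
class WeeklySnapshot:
    opened: int             # findings identified that week
    resolved: int           # findings resolved that week
    open_risk_score: float  # hypothetical total risk of findings still open


# Illustrative data only, not survey results.
snapshots = [
    WeeklySnapshot(opened=120, resolved=100, open_risk_score=840.0),
    WeeklySnapshot(opened=150, resolved=130, open_risk_score=910.0),
    WeeklySnapshot(opened=140, resolved=135, open_risk_score=965.0),
]


def resolved_trend(snaps):
    """Activity view: change in weekly resolved count, first week to last."""
    return snaps[-1].resolved - snaps[0].resolved


def exposure_change(snaps):
    """Impact view: change in open risk score, first week to last."""
    return snaps[-1].open_risk_score - snaps[0].open_risk_score


activity = resolved_trend(snapshots)   # positive: more findings closed per week
exposure = exposure_change(snapshots)  # positive: more open risk than before
```

With these sample values the team resolves more findings each week, yet the open risk score still climbs. A report built only on throughput would show progress while exposure is actually growing.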

This is an execution problem

The industry has largely solved for visibility. What it hasn’t solved is how to operationalize remediation at scale.

And AI is becoming crucial to addressing this challenge.

It’s not just there to help teams understand their environment faster. That part is already happening. The real value is in removing the friction that’s slowing execution down in the first place.

The report reveals that, right now, that friction is still very human. Ownership needs to be figured out, work needs to be routed and followed up on, and teams need to align before anything actually gets fixed. All of that takes time, time that didn’t matter as much when everything moved slower.

With attackers using AI to compress the time between identifying and exploiting a weakness, defenders don’t just need better insight. They need to be able to act at a similar pace. And that’s not something you get by layering AI onto analysis alone.

AI needs to be used in a way that reduces the dependency on manual coordination, makes ownership clearer, and moves work forward without the same level of back-and-forth that currently slows everything down.

Because at this point, speed isn’t just about efficiency, it’s part of the risk equation. And if execution continues to lag behind detection, that gap is only going to get more expensive to maintain.

Read the full 2026 State of Exposure Management Report here.
