How AI Security Agents Optimize Exposure Management

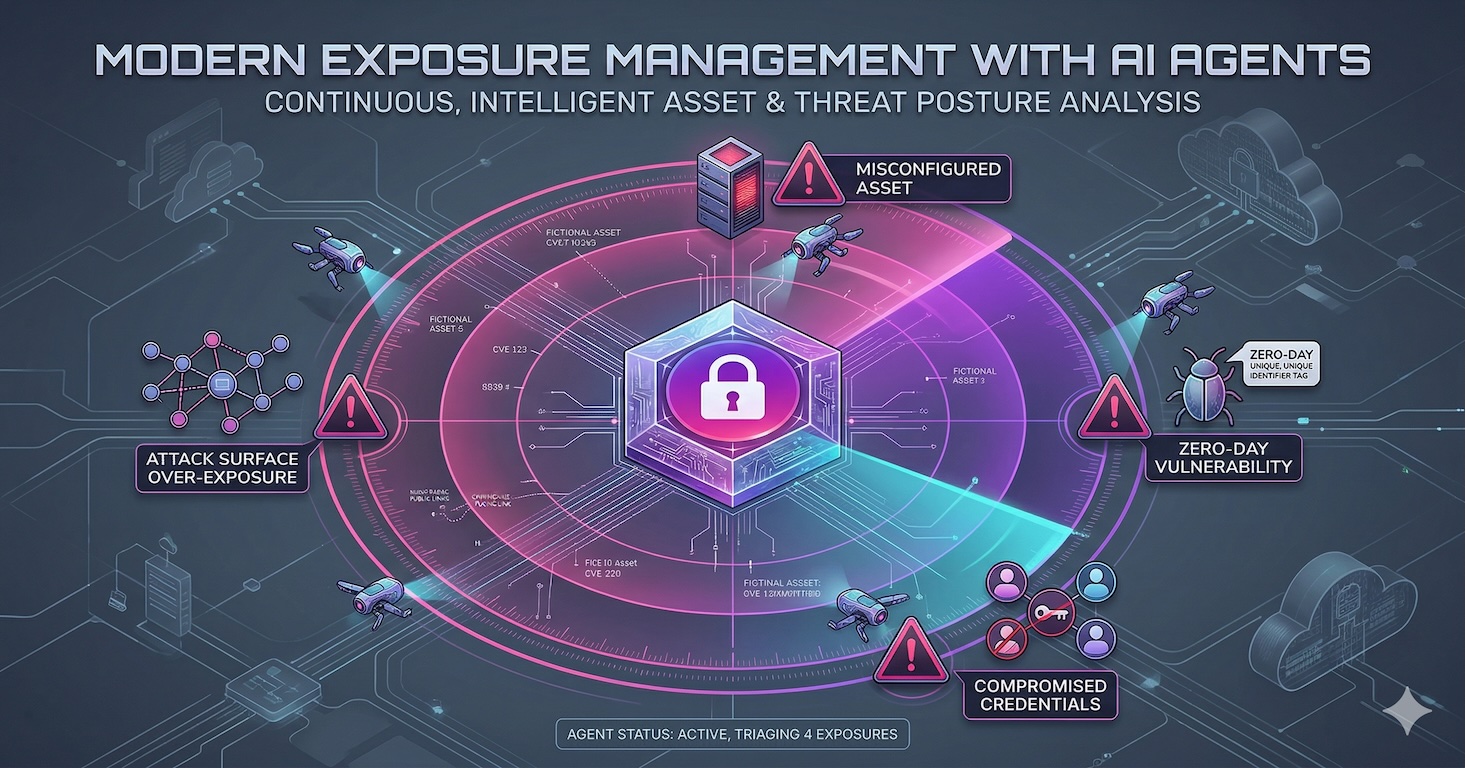

AI security agents transform exposure management by shifting teams from manual triage toward autonomous defense. They bridge the gap between vulnerability discovery and resolution by automating work at three critical bottlenecks: contextual risk prioritization, ownership routing, and assisted remediation that drafts code fixes. This AI-driven approach takes repetitive tasks off analysts’ plates, cuts through alert fatigue, and shortens remediation cycles.

For decades, when people pictured the “AI-powered future,” it looked like an old-school sci-fi poster: flying cars, talking robots, a machine making your morning coffee. Fast forward to today, and while the coffee part isn’t too far off, the real story of AI is a lot more practical than a car with wings (albeit maybe less “cool”). It’s in the tools we use every day, helping us write, code, communicate, and make decisions faster.

Exposure management is no exception. The volume of vulnerabilities has exploded, stretching teams to their limits. Analysts spend hours on triage, prioritization, and coordination, just trying to keep the lights on. Much like how AI copilots have transformed software development, AI security agents can become the digital teammates that accelerate and scale defensive operations. They’re capable of reading, interpreting, and acting on security data at machine speed, enabling what’s quickly becoming known as AI-driven exposure management.

Attackers are already exploiting AI to automate reconnaissance and accelerate exploitation. To stay ahead, defenders must adapt. This cat-and-mouse game isn’t just about better detection; it’s about smarter, more autonomous action. By embracing AI-powered risk prioritization and autonomous remediation, security teams can finally close the gap between discovery and defense.

Where AI Security Agents Deliver the Most Impact

The practical applications of AI agents are already taking shape inside security programs. From deciding what to fix first to finding the right owner and even preparing the actual fix, these agents can take on some of the most repetitive, resource-draining parts of exposure management.

1. Prioritization

With so many different scoring frameworks, prioritizing security findings has always been one of the biggest pain points in exposure management. Teams find themselves buried in endless lists of “critical” issues that can’t all be fixed at once. And while the Exploit Prediction Scoring System (EPSS) is already based on machine learning, modern AI security agents can take things a few steps further. Rather than applying one fixed formula, these agents can analyze findings across multiple sources, understand the relationships between them, and surface what truly matters based on context, not just on severity.

For example, most scoring systems focus solely on vulnerabilities. But what about configuration issues that don’t have a CVSS score? With AI-driven exposure management, an agent can research each finding independently, assess its potential impact, and prioritize it accordingly.

AI security agents can also factor in business context, something most existing scoring models ignore. A vulnerability on a production system that handles customer data doesn’t carry the same risk as the same issue on an internal dev server. Agents can connect those dots automatically, applying AI-powered risk prioritization to weigh the technical severity of an exposure against its real-world consequences.
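To make the idea concrete, here is a minimal sketch of context-weighted scoring. It is an illustration only, not how any particular product computes risk: the `Finding` fields, the environment weights, and the `contextual_risk` formula are all assumptions chosen for readability.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    finding_id: str
    severity: float            # 0-10 technical severity, e.g. a CVSS base score
    exploit_likelihood: float  # 0-1 probability, e.g. an EPSS score
    environment: str           # "production", "staging", or "dev"
    handles_customer_data: bool


# Hypothetical weights; a real program would tune these to its own risk appetite.
ENVIRONMENT_WEIGHT = {"production": 1.0, "staging": 0.6, "dev": 0.3}


def contextual_risk(f: Finding) -> float:
    """Blend technical severity with business context into a 0-100 score."""
    # Technical part: severity scaled by how likely exploitation is.
    technical = (f.severity / 10.0) * (0.5 + 0.5 * f.exploit_likelihood)
    # Business part: where the asset runs and what data it touches.
    business = ENVIRONMENT_WEIGHT.get(f.environment, 0.5)
    if f.handles_customer_data:
        business = min(1.0, business + 0.3)
    return round(100 * technical * business, 1)


findings = [
    Finding("F-1", 9.8, 0.02, "dev", False),        # "critical" on a dev box
    Finding("F-2", 7.5, 0.60, "production", True),  # "high" on a customer-facing system
]
ranked = sorted(findings, key=contextual_risk, reverse=True)
for f in ranked:
    print(f.finding_id, contextual_risk(f))
```

With these assumed weights, the lower-severity production finding outranks the higher-severity dev finding, which is the behavior described above.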

And before any prioritization model can work well, teams need clean data. Triaging false positives and redundant alerts eats up valuable time. Here too, agents can help. By reading the findings, researching patterns, and comparing them against trusted sources, they can filter out the noise and leave security teams with a refined, reliable list of what truly needs attention.
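The simplest part of that cleanup, collapsing redundant alerts, can be sketched as follows. This assumes each raw finding is a dictionary with hypothetical `asset`, `issue_id`, and `source` keys; real scanner output varies, and an agent would typically do fuzzier matching than this exact-key approach.

```python
def deduplicate(findings):
    """Merge findings that describe the same issue on the same asset."""
    merged = {}
    for f in findings:
        # Normalize case and whitespace so "WEB-01 " and "web-01" match.
        key = (f["asset"].strip().lower(), f["issue_id"].strip().upper())
        if key not in merged:
            merged[key] = {"asset": key[0], "issue_id": key[1], "sources": []}
        # Keep track of every tool that reported the issue.
        if f["source"] not in merged[key]["sources"]:
            merged[key]["sources"].append(f["source"])
    return list(merged.values())


# Hypothetical scanner output; IDs and names are placeholders.
raw = [
    {"asset": "web-01", "issue_id": "EXAMPLE-0001", "source": "scanner_a"},
    {"asset": "WEB-01 ", "issue_id": "example-0001", "source": "scanner_b"},
    {"asset": "db-01", "issue_id": "EXAMPLE-0002", "source": "scanner_a"},
]
unique = deduplicate(raw)
print(len(raw), "raw findings ->", len(unique), "unique findings")
```

Keeping the list of reporting sources is a deliberate choice: an issue confirmed by several independent tools is less likely to be a false positive.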

2. Finding the Owner

Ask any security team what slows them down the most, and you’ll probably hear the same answer: finding out who owns what.

Every new exposure – whether it’s a vulnerable server, misconfigured cloud bucket, or outdated dependency – eventually needs to land in the hands of the person responsible for fixing it. But in large, complex environments, ownership isn’t always clear. Systems change, teams reorganize, documentation drifts out of date. What should be a five-minute task often turns into weeks of chasing down names, checking spreadsheets, and pinging people across multiple channels.

This is exactly the kind of repetitive, investigative work that AI security agents are well suited for. Instead of relying on tribal knowledge or outdated organizational charts, agents can sift through existing data sources, such as cloud configurations, asset inventories, ticketing systems, or even internal communication threads, to identify who’s most likely responsible for a given resource.

By analyzing patterns in code commits, historical tickets, and collaboration tools, agents can infer ownership and automatically suggest or assign the right person or team. It’s a subtle but powerful shift. The time saved by not having to track down every owner manually means security teams can keep findings moving instead of letting them pile up.
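One rough way to picture ownership inference is as weighted voting over signals. The sketch below assumes the signals have already been collected as `(kind, team)` pairs; the signal kinds, their weights, and the `min_share` threshold are hypothetical values, not a description of any specific agent.

```python
from collections import defaultdict

# Hypothetical weights: an explicit cloud tag counts more than an old ticket.
SIGNAL_WEIGHT = {"cloud_tag": 3.0, "recent_commit": 2.0, "past_ticket": 1.0}


def infer_owner(signals, min_share=0.5):
    """Return (team, share) where share is the winning team's fraction of the vote.

    If no team reaches min_share, team is None so a human can decide.
    """
    scores = defaultdict(float)
    for kind, team in signals:
        scores[team] += SIGNAL_WEIGHT.get(kind, 0.5)  # unknown signals count a little
    if not scores:
        return None, 0.0
    team, best = max(scores.items(), key=lambda kv: kv[1])
    share = best / sum(scores.values())
    return (team if share >= min_share else None), share


signals = [
    ("cloud_tag", "payments"),
    ("recent_commit", "payments"),
    ("recent_commit", "platform"),
    ("past_ticket", "platform"),
]
owner, share = infer_owner(signals)
print(owner, f"{share:.0%}")
```

Returning `None` below the threshold keeps the agent in "suggest" mode when the evidence is split, rather than assigning a ticket to the wrong team.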

Within the broader practice of AI-driven exposure management, this ability to connect each issue with its rightful owner closes one of the most frustrating gaps in the remediation workflow. It also reduces friction between security and engineering teams by ensuring that findings arrive with clear, context-aware routing, not vague handoffs or mass notifications.

3. Remediation

If there’s one stage in the exposure management process that truly tests the patience of security teams, it’s remediation.

Finding issues is easy. Getting them fixed? That’s the hard part.

The tension usually starts with ownership but runs deeper. Security teams push for remediation SLAs, while developers and IT teams are focused on product deadlines, system stability, or uptime metrics. For every vulnerability ticket, there’s often a back-and-forth about risk level, business impact, or the effort needed to patch. Multiply that by thousands of findings, and the result is familiar: slow progress, long backlogs, and no one feeling particularly good about it.

This is where AI security agents can start to make a meaningful dent. They don’t just pass information along; they help make the fix itself easier, safer, and faster.

One way is through smarter context. Agents can perform quick risk assessments on the relevant patch, configuration, or dependency update and highlight potential side effects before the fix even begins. For example, they can read release notes or changelogs, identify breaking changes, and summarize what developers actually need to know. That kind of targeted insight reduces hesitation and helps the fixing team move with confidence.
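As a crude stand-in for that kind of pre-fix assessment, the sketch below checks two things: whether the upgrade crosses a major version (which, under Semantic Versioning, signals incompatible changes) and whether the release notes contain wording that hints at breakage. An AI agent would read the notes with far more nuance than a keyword match; the function names, the keyword list, and the sample notes are all assumptions.

```python
import re

# Hypothetical list of words that often accompany breaking changes.
BREAKING_HINTS = re.compile(
    r"\b(breaking|removed|deprecated|incompatible|migration)\b", re.IGNORECASE
)


def parse_version(version):
    """Parse 'v1.2.3' or '1.2.3' into a tuple of ints (plain numeric versions only)."""
    return tuple(int(part) for part in version.lstrip("v").split(".")[:3])


def assess_upgrade(current, target, release_notes):
    """Summarize what a developer should look at before applying an upgrade."""
    major_bump = parse_version(target)[0] > parse_version(current)[0]
    flagged = [
        line.strip()
        for line in release_notes.splitlines()
        if BREAKING_HINTS.search(line)
    ]
    return {
        "major_bump": major_bump,
        "flagged_lines": flagged,
        "needs_review": major_bump or bool(flagged),
    }


notes = """- Fixed input validation in the parser
- Removed legacy `parse_v1` API
- Performance improvements"""
result = assess_upgrade("2.4.1", "3.0.0", notes)
print(result)
```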

Agents can also generate the groundwork for a remediation plan, listing out the steps, commands, or configuration changes needed to resolve the issue. In some cases, they can even draft the code changes or open the pull request automatically. This is where autonomous remediation starts to take shape: the ability for agents to handle repetitive or low-risk fixes end to end, freeing up engineers to focus on the complex ones that truly require human judgment.
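Deciding which fixes are safe to handle end to end is essentially a policy question. A minimal sketch of such a gate might look like the following, where the `ProposedFix` fields, the `AUTO_ELIGIBLE` set, and the three routing outcomes are hypothetical and would differ from team to team.

```python
from dataclasses import dataclass


@dataclass
class ProposedFix:
    finding_id: str
    change_type: str     # "dependency_patch", "config_change", or "code_change"
    breaking_risk: bool  # e.g. flagged during a changelog review
    tests_pass: bool     # result of running the test suite against the change
    environment: str     # "production", "staging", or "dev"


# Hypothetical policy: only routine dependency patches may be merged unattended.
AUTO_ELIGIBLE = {"dependency_patch"}


def route_fix(fix: ProposedFix) -> str:
    """Choose how much autonomy a proposed fix gets."""
    if fix.breaking_risk or not fix.tests_pass:
        return "human_review"        # anything risky goes to a person first
    if fix.change_type in AUTO_ELIGIBLE and fix.environment != "production":
        return "auto_merge"          # low-risk, repetitive fix handled end to end
    return "draft_pull_request"      # agent prepares the change, engineer approves


print(route_fix(ProposedFix("F-10", "dependency_patch", False, True, "staging")))
print(route_fix(ProposedFix("F-11", "code_change", False, True, "production")))
print(route_fix(ProposedFix("F-12", "dependency_patch", True, True, "dev")))
```

Starting with a narrow `AUTO_ELIGIBLE` set and widening it over time lets a team adopt autonomy gradually instead of all at once.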

Of course, not every fix should be automated, and not every team is ready for that level of autonomy. But by combining AI-powered risk prioritization with intelligent remediation support, organizations can finally shrink the time between identifying an issue and resolving it – a bottleneck that has held security programs back for years.

Closing the Gap Between Discovery and Action

AI isn’t here to replace security teams; it’s here to help them move faster, think smarter, and stay ahead. By offloading repetitive, manual tasks to AI security agents, teams can finally focus on strategy instead of survival.

From prioritization to ownership to remediation, this shift from automation to autonomy is what will define the next era of AI-driven exposure management.

Learn more about how Seemplicity’s AI agents are transforming exposure management here.
